the yt project's blog

© 2023 yt project blog

© 2023 yt project blog

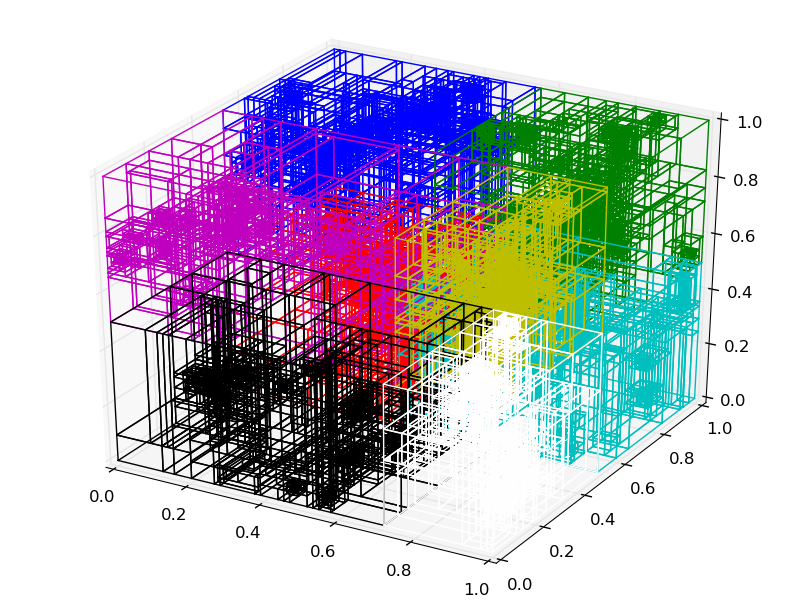

Let’s talk about the progress we’ve made on a kD-Tree decomposition.

Want to contribute a post? Check out our contributor guide

Check out our documentation here

The yt-project homepage

Our quickstart notebooks on getting started

The yt source repository

yt has many extension packages to help you in your scientific workflow! Check these out, or create your own.

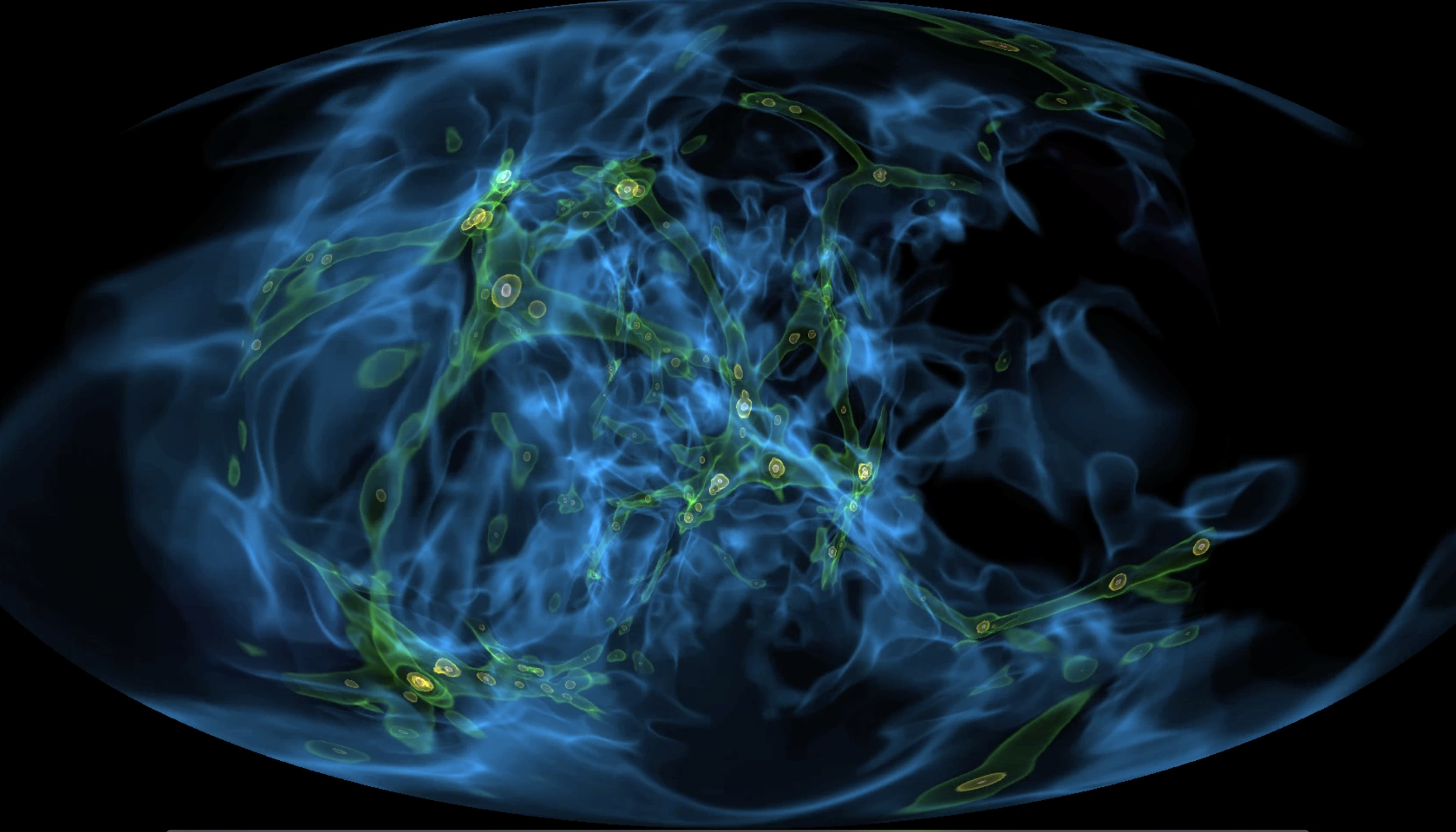

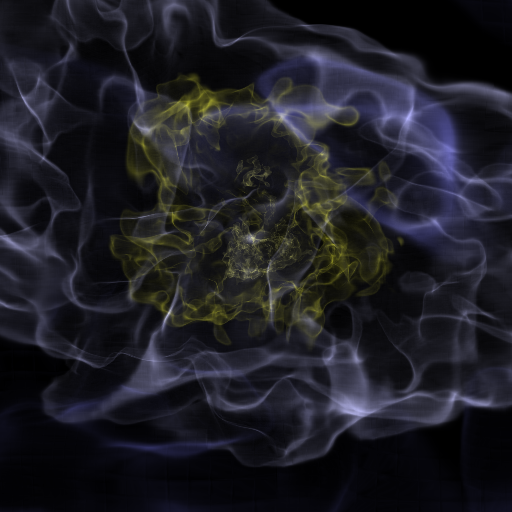

ytini is set of tools and tutorials for using yt as a tool inside the 3D visual effects software Houdini or a data pre-processor externally to Houdini.

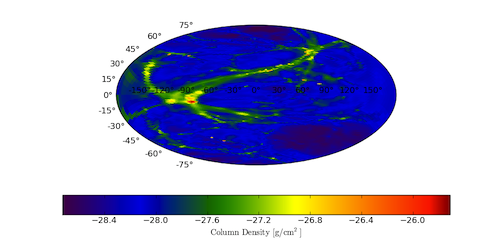

Trident is a full-featured tool that projects arbitrary sightlines through astrophysical hydrodynamics simulations for generating mock spectral observations of the IGM and CGM.

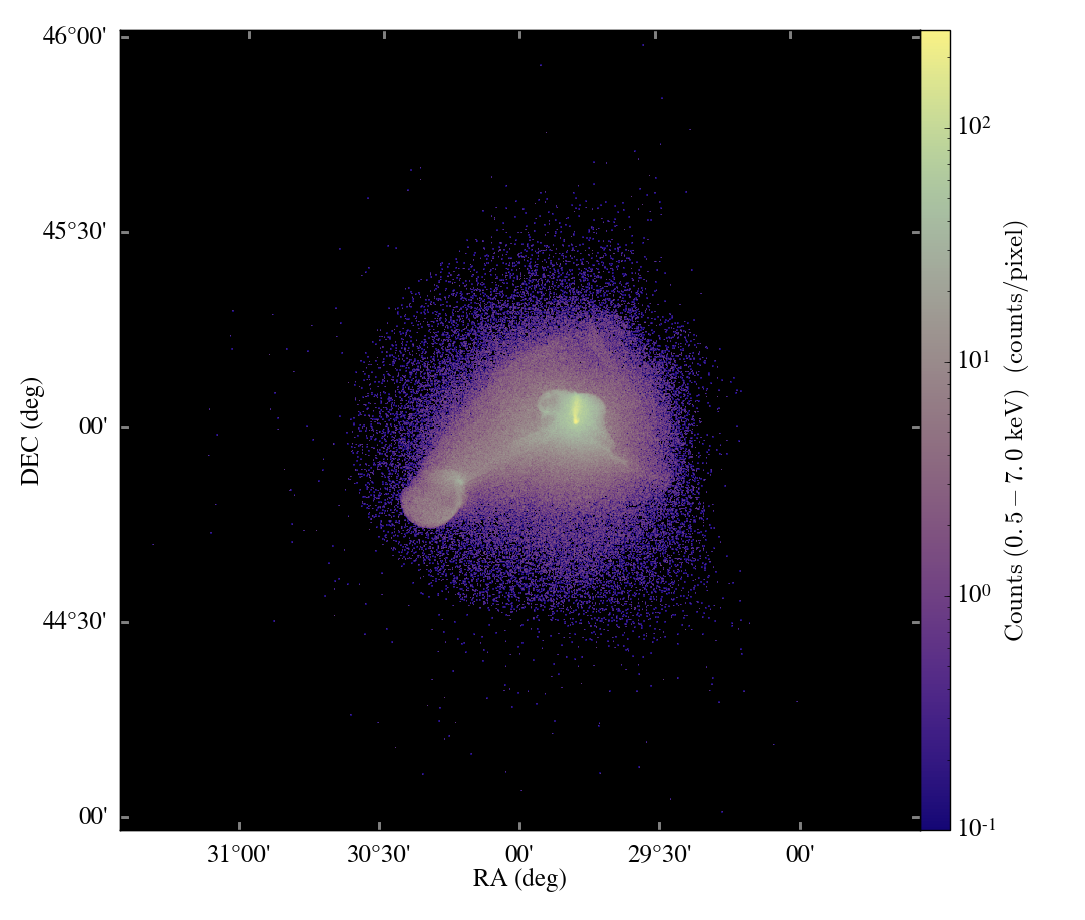

pyXSIM is a Python package for simulating X-ray observations from astrophysical sources.

Analyze merger tree data from multiple sources. It’s yt for merger trees!

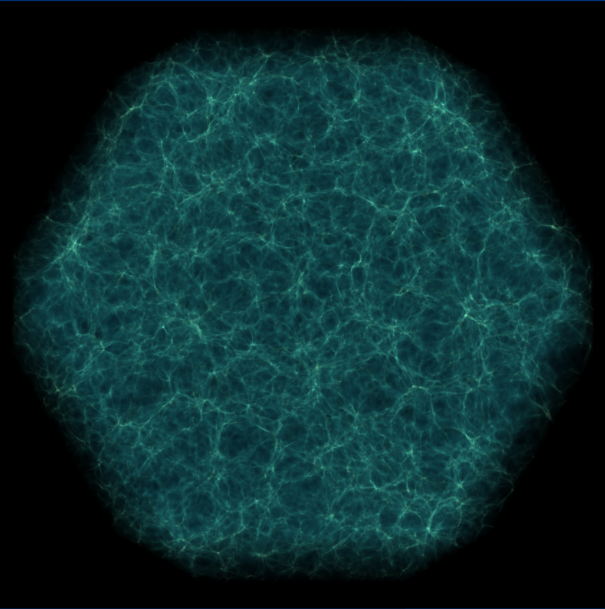

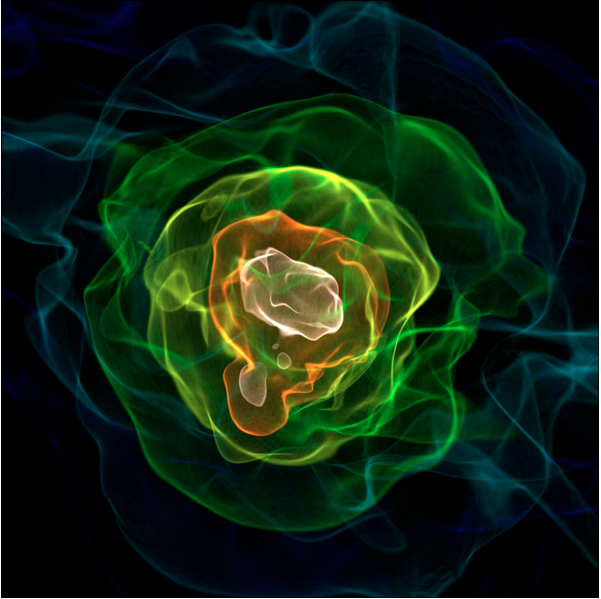

yt_idv is a package for interactive volume rendering with yt! It provides interactive visualization using OpenGL for datasets loaded in yt. It is written to provide both scripting and interactive access.

widgyts is a jupyter widgets extension for yt, backed by rust/webassembly to allow for browser-based, interactive exploration of data from yt.

yt_astro_analysis is the yt extension package for astrophysical analysis.

Finally, check out our development docs on writing your own yt extensions!

Are you interested in contributing to the yt blog?

Check out our post on contributing to the blog for a guide!

We welcome contributions from all members of the yt community. Feel free to reach out if you need any help.

The yt hub at https://girder.hub.yt/ has a ton of resources to check out, whether you have yt installed or not.

The collections host all sorts of data that can be loaded with yt. Some have been used in publications, and others are used as sample frontend data for yt. Maybe there’s data from your simulation software?

The rafts host the yt quickstart notebooks, where you can interact with yt in the browser, without needing to install it locally. Check out some of the other rafts too, like the widgyts release notebooks – a demo of the widgyts yt extension pacakge; or the notebooks from the CCA workshop – a user’s workshop on using yt.